I want to start with the uncomfortable question: is it actually true that any of this works?

Walking around a hospital, the most tangible field is surgery. Anatomical resolution of pathology. Remove the defect, job done - pathologies exist in the human body until they don’t. Generative processes are by far the most intractable, and bodies are generative things. So nipping it in the bud - i.e. anatomical problem-solving - is the most effective approach available, because if it weren’t, the locus of disease would persist by definition. Well-defined diseases whose chronobiology is evident and whose future pathology is predictable should, in principle, be solvable. Modelling the generative process is most of the work.

The mind is the ultimate generative process. The interventions I’ve actually seen produce substantial change are anatomical perturbation (interventional psychiatry - ECT, DBS), widespread pharmacological change (clozapine, lithium), or sustained intensive attempts to gain leverage over the generative process itself (self-inquiry, meditation, psychedelics). Work where the generative process has not been identified is not futile, but it is misdiagnosis: pushing on the lever in some funky, disabling way.

That was where I started. It didn’t survive contact with itself.

The clean framework breaks

The instinct to put surgery on top of the hierarchy is partly correct but it gives you a false comfort. The reason surgery feels different from everything else is that some pathologies have a sufficiently isolated bottleneck - a clot, a stenosis, a tumour mass, a meniscal tear - that removing one node collapses the whole problem. That isn’t a different category of intervention. It’s the limit case of dynamic intervention, where the dynamics happen to have a removable single point of failure.

Body medicine is dynamic-computational too. Diabetes is a glucose control loop failure. Hypertension is baroreceptor and RAAS gain pathology. Heart failure is neurohumoral runaway. Cancer is regulatory network defection. The cleanest counter-evidence to the “anatomical wins” framing is the sham-surgery literature: arthroscopic debridement for knee OA, partial meniscectomy for degenerative tears, vertebroplasty - all matched sham in proper RCTs. When the anatomical lesion isn’t actually generating the symptom, cutting it doesn’t help. So surgery wins precisely when the anatomical framing is correct, which means anatomical thinking can itself be the misdiagnosis.

Once you stop pretending surgery is special, the question becomes: how do you intervene in dynamic computational systems generally?

How you actually move dynamic systems

Six modes span almost everything we do, body and mind.

Substrate substitution. Replace what the system can’t generate. Insulin, levodopa, levothyroxine, factor VIII. Clean when the missing variable is well-characterised and the rest of the loop is intact. Fails progressively as downstream loops adapt.

Parameter modulation. Don’t remove components, change rate constants. Beta-blockers, SSRIs, ACE inhibitors, statins. Quadruple therapy in HFrEF is parameter tuning of multiple neurohumoral loops, and it works dramatically - the estimated relative mortality reductions stack to roughly 60% - without anyone ever fixing the heart anatomically. This is the dominant mode in chronic disease and the one we underrate culturally because it doesn’t have a clean narrative.
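To make "stacking" concrete: if each drug class multiplies whatever hazard remains, and the effects are independent, the combined relative reduction is one minus the product of the hazard ratios. A minimal sketch under that independence assumption. The four hazard ratios are placeholders I picked for illustration, not trial results, and `combined_risk_reduction` is a hypothetical helper.

```python
from math import prod

def combined_risk_reduction(hazard_ratios):
    """Combined relative risk reduction, assuming independent multiplicative effects."""
    return 1.0 - prod(hazard_ratios)

# Hypothetical hazard ratios for four drug classes (illustrative placeholders only).
illustrative_hrs = [0.85, 0.80, 0.75, 0.80]

reduction = combined_risk_reduction(illustrative_hrs)
largest_single = 1.0 - min(illustrative_hrs)

print(f"Largest single-drug reduction: {largest_single:.0%}")
print(f"Combined reduction: {reduction:.0%}")
```

No single loop adjustment in this toy example does better than 25%, yet the stack lands near 60%. That is the sense in which multi-loop parameter tuning can beat any one "fix".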

Set-point reprogramming. Bariatric surgery looks anatomical but works through GLP-1, PYY, ghrelin, bile-acid changes that move the body’s defended weight set-point downward. Diet doesn’t move the set-point; the system fights you back to it. GLP-1 agonists are doing similar work pharmacologically. The implication for psychiatry is direct: most psychiatric set-points (mood baseline, anxiety reactivity, default mode activity) are defended in the same way, which is why most interventions regress.
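A toy model of what "defended" means, assuming the simplest possible dynamics: weight relaxes toward a set-point at a fixed rate, and a diet is a temporary external push. The function name, rate constant, and units are all hypothetical. This is a sketch of the argument, not a physiological model.

```python
def simulate(weeks, set_point, push, k=0.1, start=100.0):
    """Euler-step a variable that relaxes toward set_point(t) while push(t) acts on it."""
    w = start
    for t in range(weeks):
        w += -k * (w - set_point(t)) + push(t)
    return w

# Diet: an external push for the first 26 weeks, set-point left at 100.
after_diet = simulate(
    156,
    set_point=lambda t: 100.0,
    push=lambda t: -1.0 if t < 26 else 0.0,
)

# Set-point reprogramming: no push at all, but the defended value drops to 80.
after_shift = simulate(156, set_point=lambda t: 80.0, push=lambda t: 0.0)

print(f"Three years after a 26-week diet: {after_diet:.1f}")
print(f"Three years after a set-point shift: {after_shift:.1f}")
```

The diet arm drifts back to where it started once the push stops. The set-point arm settles at the new value without any ongoing effort.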

Attractor perturbation plus endogenous reconfiguration. This is the interesting one for minds. ECT, ketamine, psilocybin, MDMA-assisted therapy - none of them fix anything directly. They destabilise the current attractor and let the system re-settle, ideally with concurrent input shaping where it lands. The body version is hormesis: exercise, cold, fasting - small destabilisations the system over-corrects from.
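The same idea as a toy double-well landscape: two stable states at -1 and +1 with a barrier at 0. A perturbation only matters if it gets the state past the barrier, or if something biases the landscape while the state is near it. The potential, the `settle` helper, and the `bias` term (standing in for concurrent input) are all my assumptions.

```python
def settle(x, bias=0.0, dt=0.01, steps=5000):
    """Gradient flow on V(x) = x**4/4 - x**2/2 - bias*x, starting from x."""
    for _ in range(steps):
        x += dt * (x - x**3 + bias)
    return x

start = -1.0  # the current (pathological) attractor

small_kick = settle(start + 0.5)            # stays on the same side of the barrier
large_kick = settle(start + 1.5)            # crosses the barrier
near_barrier = settle(start + 0.9)          # just short of the barrier, no help
shaped = settle(start + 0.9, bias=0.2)      # same kick, with input tilting the landscape

print(small_kick, large_kick, near_barrier, shaped)
```

The small kick and the unassisted near-barrier kick both fall back into the original well. The large kick and the shaped kick end up in the other one. The kick does no fixing. It only changes which basin the system relaxes into.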

Pathological-loop ablation. RFA for AF, DBS for PD/OCD/depression, vagotomy historically, cingulotomy. Looks anatomical but the target is a dynamic loop, not a lesion.

Long-horizon policy learning. Physiotherapy, CBT, exposure, meditation. Sustained training signal updating priors, motor policies, attentional habits. Slow, but the change is intrinsic to the system rather than imposed. The only modality that actually moves the generative process itself rather than working around it. Probably why the contemplative-practice intuition tracks.

The unifying principle: you don’t fix dynamic computational systems. You nudge them toward better attractors and let self-organisation do the work. The art is matching the perturbation timescale and granularity to the pathology’s. CML has a single molecular bottleneck, so a kinase inhibitor works. Depression doesn’t, so the effective interventions are either landscape-flattening (psychedelics, ECT) or slow policy retraining (psychotherapy, meditation), and the worst-performing interventions are the ones that pretend depression has a bottleneck - any single neurotransmitter story.

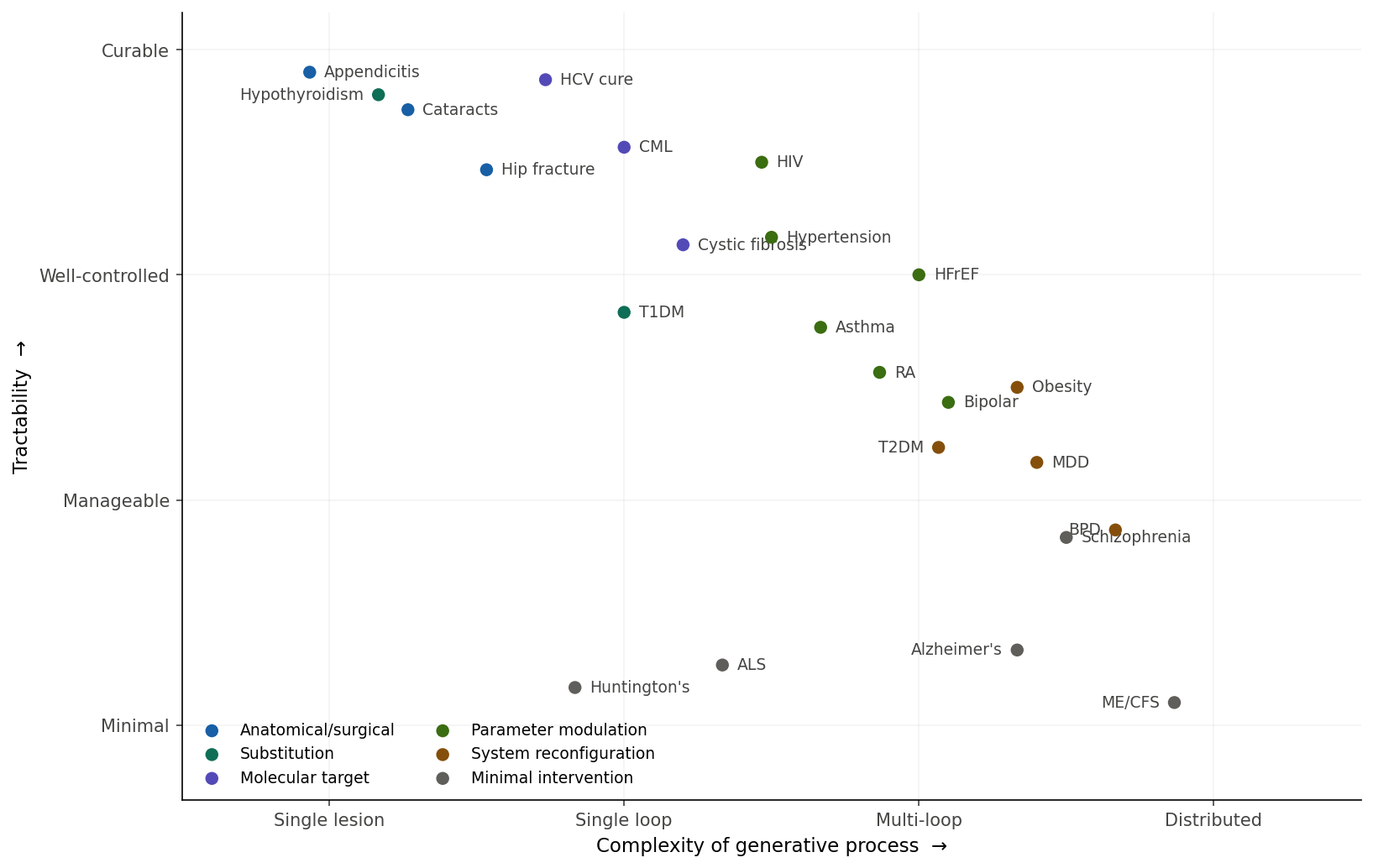

Plotted out, the territory of medicine looks roughly like this:

The diagonal is the dominant trend - complexity penalises tractability - but the interesting story is in the outliers. HFrEF and HIV sit upper-middle: highly complex pathologies whose outcomes have been transformed by treatment (near-normal life expectancy in treated HIV, large mortality reductions in HFrEF), neither solved by finding “the cause.” Both are managed by simultaneous parameter modulation across multiple loops. Huntington’s sits bottom-left: low complexity, low tractability. Single gene, fully sequenced, fully understood, and we have nothing - knowing the generative process doesn’t help if you can’t intervene at the right scale. The bottom-right swamp is where most of psychiatry, neurodegeneration, chronic pain, and functional disorders live. The unifying feature isn’t that they’re “mental”; it’s that the generative process is distributed across many loops without a clear bottleneck.

Distributed medicine

If most disease is dynamic, most effective intervention is set-point shifting, parameter modulation, or attractor perturbation. Which means most of medicine should look like a holistic restructuring of the patient’s life around the identified generative process, with episodic high-leverage perturbations layered on top. The Diabetes Prevention Program. Cardiac rehab. Ornish’s coronary regression program. SMILES dietary intervention for depression. The data is there.

The reason this isn’t the dominant care model is adherence collapse. The intervention is the sustained behaviour change, and behaviour change is the actual hard problem. Set-points are defended. Diet-and-exercise loses to bariatric surgery in the long term because diet doesn’t move the set-point.

This is also where the framework gets dangerous. “Holistic” is so permissive that anything can be slotted in - wellness clinics with juice bars are also “holistic life restructuring.” The discriminating question is whether the lifestyle change actually nudges an identifiable generative process: sleep architecture for delirium, inflammatory load for depression, vagal tone for anxiety, glycaemic variability for cognitive symptoms. When you can name the loop and measure the modulation, holistic restructuring is just precision dynamic-systems intervention. When you can’t, it’s vibes. Unfalsifiable medicine reliably underperforms even mediocre falsifiable medicine.

So perfect medicine isn’t centralised in heroic interventions. It’s a distributed control system with good sensors, layered actuators, and a long time horizon. The unit of care isn’t the encounter. It’s the trajectory.

Was that just confirmation bias?

Worth checking. I want to claim measurement is the bottleneck for moving distributed pathologies upward, and I should ask whether that’s actually true or whether I’m pattern-matching to my priors.

The strong version is wrong. The history of medicine is full of treatments that ran ahead of measurement, often for decades. Penicillin began as a chance observation of mould inhibiting bacterial growth. Lithium was a control salt in a urate-toxicity experiment - Cade was testing the wrong hypothesis and got lucky. Chlorpromazine, imipramine, the MAOIs - all serendipitous. The dopamine and monoamine theories came after the drugs, not before. Aspirin was in clinical use for about 70 years before Vane characterised COX inhibition. Smallpox vaccination preceded any immunology. Warfarin came from cattle dying on sweet clover hay. Many cytotoxic chemotherapies were discovered by accident.

The defensible version: discovery often precedes measurement; optimisation and population-scale deployment usually require it. You can give penicillin without serum levels but you can’t run vancomycin without troughs. The tighter version that holds in psychiatry specifically: the diseases where serendipity gave us a first effective drug and no further fundamental progress are the ones where measurement never caught up. Lithium 1949, no successor. Chlorpromazine 1952 - clozapine is the only meaningfully better antipsychotic. Imipramine 1957 - SSRIs aren’t really better, just safer. The pharmacopoeia plateaued because we never built the assays needed to design second-generation drugs intelligently.

So measurement isn’t the cause of breakthrough. It’s strongly correlated with continued progress past the first lucky compound.

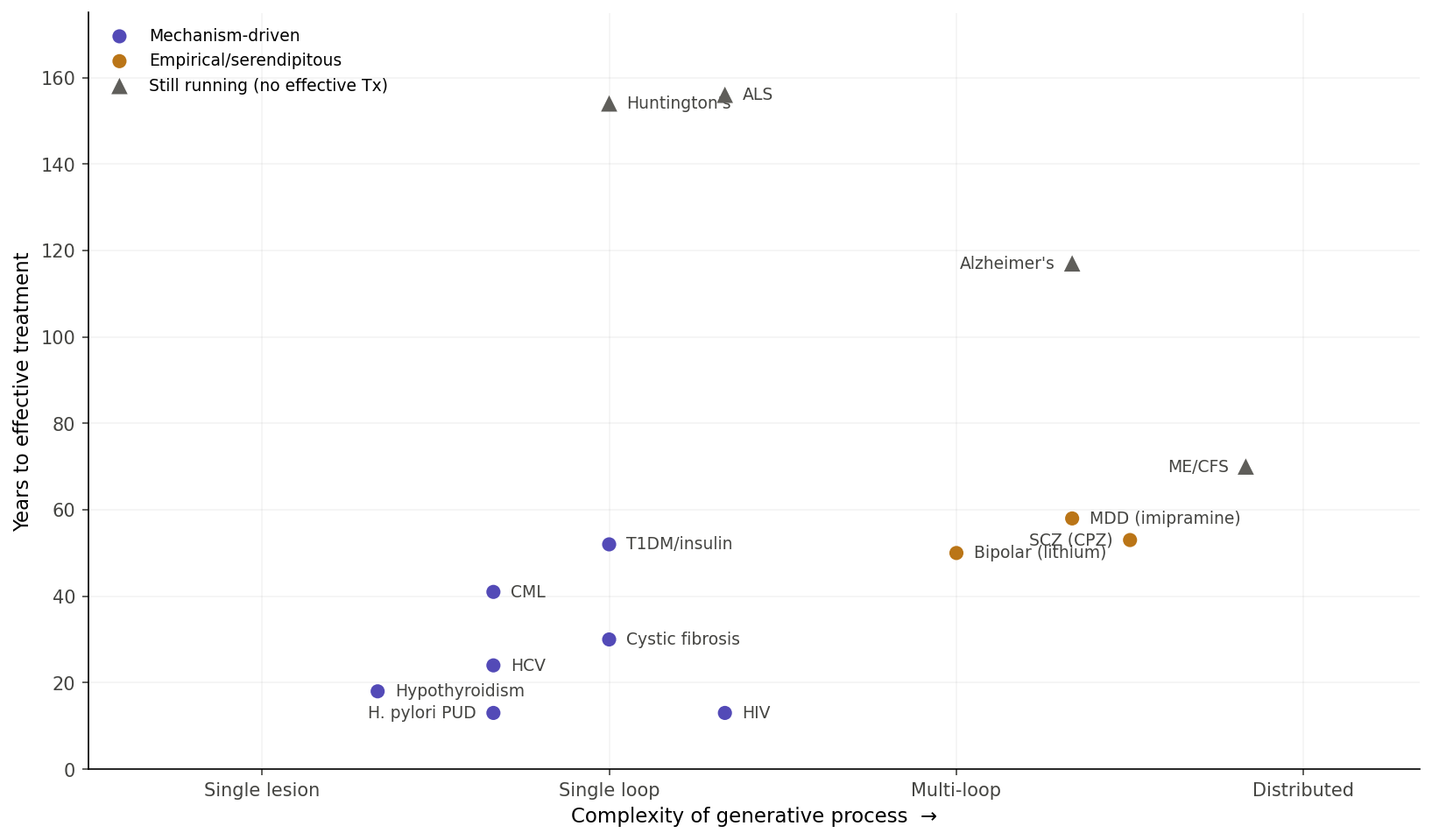

Time-to-solve, by regime

Plotted against complexity, three regimes appear and they don’t form a smooth diagonal.

Mechanism-driven solutions, once the right molecular target is identified, arrive in 13–50 years almost regardless of complexity. HIV from virus identification to HAART: 13 years. H. pylori to triple therapy: 13 years. HCV to DAA: 24. Hypothyroidism from Gull’s myxedema description to Murray’s thyroid extract: 18. Even high-complexity pathologies get there fast when the right kind of handle exists.

Empirical/serendipitous solutions for psychiatric conditions arrive at ~50–60 years from clinical recognition and then plateau. Bipolar (Kraepelin to Cade): 50 years. Schizophrenia (Kraepelin to chlorpromazine): 53. Depression (modern characterisation to imipramine): ~58. Then nothing fundamentally better.

Stuck diseases sit at 70–156 years and counting. Huntington’s: target known for 30+ years, no actionable intervention. Alzheimer’s: 117 years from description, lecanemab is modest at best. ME/CFS: no consensus mechanism. Three different failure modes - known target with no actionable handle, contested mechanism, no agreed mechanism at all.

Complexity matters less than whether the right kind of handle exists.
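A quick check on the intervals quoted above, grouped by regime. The numbers are copied from the examples in the text (depression is the approximate ~58), and the stuck diseases are left out because their clocks are still running. The dictionary and helper names are mine.

```python
from statistics import median

# Years from recognition/target identification to an effective treatment,
# as quoted in the text above.
years_to_solution = {
    "mechanism-driven": {"HIV": 13, "H. pylori": 13, "HCV": 24, "hypothyroidism": 18},
    "empirical": {"bipolar": 50, "schizophrenia": 53, "depression": 58},
}

def summarise(regime):
    """Return (shortest, median, longest) interval for one regime."""
    values = sorted(years_to_solution[regime].values())
    return values[0], median(values), values[-1]

for regime in years_to_solution:
    low, mid, high = summarise(regime)
    print(f"{regime}: {low}-{high} years, median {mid}")
```

On these examples the two regimes don’t overlap at all: the slowest mechanism-driven case is still less than half the fastest empirical one.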

The in silico bet

If measurement isn’t the only path forward - and it might be the slow path - the alternative is computational. Build generative models of the disease, perturb them in silico, design interventions before testing in humans. The track record is mixed but real. The UVA/Padova T1DM simulator was accepted by the FDA as a substitute for animal studies in artificial pancreas algorithm testing - one of very few in silico models with regulatory acceptance. The Virtual Epileptic Patient was used to plan epilepsy surgery in a clinical trial. AlphaFold and molecular dynamics genuinely accelerated structure-based drug design. Cardiac action potential models drive arrhythmia screening. Adaptive therapy in metastatic prostate cancer is designed using tumour evolution models.

Computational psychiatry hasn’t done this yet. Predictive coding accounts of psychosis describe positive symptoms beautifully but haven’t generated novel treatments. Reinforcement-learning models of depression similar. Most psychiatric models are post-hoc fits - they explain why the data looks how it does without predicting what intervention works. The brain is too high-dimensional for current approaches.

The honest limit: in silico models still need ground truth to calibrate. You don’t escape measurement; you shift its role from the answer to a constraint on simulation. The bet is that for distributed dynamic pathologies, generative simulation might be a better leverage point than direct measurement, because the variables that actually matter - attractor structure, gain, criticality, coupling, predictive precision - aren’t directly measurable but are simulatable from sparser data. Measurement-light, not measurement-free.

It’s a real bet, not a safe one. But the alternative - wait for someone to invent a viral-load-equivalent for depression - has been the implicit strategy for 70 years and produced the plateau.

Ophthalmology as the existence proof

The cleanest natural experiment is ophthalmology. It has four “easy mode” features simultaneously, and basically nothing else in medicine has all of them. Optical accessibility - the eye is one of the very few organs you can image to micron resolution non-invasively, and OCT gives near-histological resolution of retinal layers without touching the patient. Direct functional readout - visual acuity is one of the cleanest outcome measures in medicine. Direct route of administration - topical, intravitreal, intracameral, no first-pass metabolism, far less of a systemic side-effect ceiling. Localised conserved anatomy - eyes are remarkably similar between people.

The exemplars line up. Cataract surgery is probably the most successful operation ever performed at scale - anatomical resolution. Anti-VEGF for wet AMD turned a blindness diagnosis into a managed condition via monthly injection - pure VEGF-loop modulation, designed because we could see the leaking vessels. Glaucoma is the parameter-modulation paradigm: measure IOP, prostaglandin analogue, re-measure, titrate.

The retina is brain tissue. Embryologically, histologically, functionally. What ophthalmology has achieved is roughly what neurology and psychiatry could achieve if they had equivalent measurement and delivery access. The eye is the only part of the brain we can see directly, and it’s also the only part we treat well. That isn’t coincidence. It’s most of the causal story.

Where this leaves things

The gap between ophthalmology and psychiatry is essentially the gap between “we can image and inject the loops we’re modulating” and “we cannot.” Closing that gap is what actually matters for the bottom-right of the disease map. Two paths exist.

The slow path is biological - wait for the right biomarker, the right imaging modality, the right invasive technique. This has been the implicit strategy for the last 70 years and produced the plateau.

The faster path is computational - build calibrated generative models of the dynamics, use them to identify which sparse high-fidelity measurements would maximally constrain the model, then use the model to design layered interventions before testing in humans.
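What "which measurement would maximally constrain the model" could mean in the simplest case: a two-parameter linear-Gaussian model, where each candidate measurement adds information along its sensitivity vector, and you pick the one that shrinks the posterior most. I’m using the determinant of the posterior precision (D-optimality) as the criterion. That choice, the parameter names, and all the numbers are assumptions for illustration.

```python
def posterior_precision(prior, h, noise_var):
    """Prior precision plus the information from one measurement y = h . theta + noise."""
    return [[prior[i][j] + h[i] * h[j] / noise_var for j in range(2)] for i in range(2)]

def det2(m):
    """Determinant of a 2x2 matrix."""
    return m[0][0] * m[1][1] - m[0][1] * m[1][0]

def best_measurement(prior, candidates):
    """Pick the candidate that maximises det(posterior precision)."""
    return max(
        candidates,
        key=lambda name: det2(posterior_precision(prior, *candidates[name])),
    )

# Hypothetical parameters: (loop gain, coupling). The prior already pins down
# the first far better than the second.
prior = [[10.0, 0.0], [0.0, 0.1]]

# name -> (sensitivity vector, noise variance); both entries are made up.
candidates = {
    "precise_but_redundant": ((1.0, 0.0), 0.1),
    "noisy_but_informative": ((0.0, 1.0), 1.0),
}

choice = best_measurement(prior, candidates)
print(choice)
```

The cleaner instrument loses here because it measures what the model already knows. The noisier one wins because it touches the parameter the model is most uncertain about, which is the practical content of "sparse but maximally constraining".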

Neither path escapes the requirement to know what loop you’re modulating. Both are bets on different parts of what “knowing” means. The first bets on measuring it directly. The second bets on simulating it well enough that you don’t need to.

True medicine, then, is distributed. Perfect medicine looks less like a heroic intervention and more like an instrumented control system with the right sensors, layered actuators, and a long enough time horizon. The clinician role drifts from mechanic toward systems engineer. The unit of care is the trajectory. And the central scientific work - the actual frontier - is the construction of the instruments and models that let us see the loops we are operating on. Until you can see them, every intervention is, at best, a lucky empirical compound. Once you can, even highly complex pathologies tend to give way within a generation.

That, I think, is the more honest summary of whether any of this works. It works when you can see what you’re doing. The whole project of modern medicine - including most of what I do clinically, including most of the AI systems I’m trying to build - is upstream of that simple condition.